This weekend, I was invited to participate in Rhizome’s Seven on Seven in NYC — an event that pairs seven artists with seven technologists, challenging them to create something in one day and present it to an audience the next day.

The other teams were a humbling roster of creative geeks, including Ricardo “mrdoob” Cabello, Ben Cerveny, Jeri Ellsworth, Zach Lieberman, Kellan Elliott-McCrea, Chris “moot” Poole, Bre Pettis, and Erica Sadun.

I was paired with Michael Bell-Smith, whose digital art I’d admired and linked to in the past. It was a perfect match, and we’re very happy to announce the result of our collaboration: Supercut.org (warning: NSFW audio).

Supercut.org is an automatic supercut composed entirely out of other supercuts, combined with a way to randomly shuffle through all of the supercut sources.

The Idea

When we started work on Friday morning, Michael and I brainstormed what we wanted to accomplish: something visual, high-concept (i.e., explainable in a tweet), and hopefully with a sense of humor.

We quickly realized that our interest in supercuts was fertile ground. Michael’s work often touches on structural re-edits and remixes, such as Oonce-Oonce, Battleship Potemkin: Dance Edit, Chapters 1-12 of R. Kelly’s Trapped in the Closet Synced and Played Simultaneously, and his mashup album mixing pop vocals over their ringtone versions.

Both of us were fascinated by this form of Internet folk art. Every supercut is a labor of love. Making one is incredibly time-consuming, taking days or weeks to compile and edit a single video. Most are created by pop culture fans, but they’ve also been used for film criticism and political commentary. It’s a natural byproduct of remix culture: people using sampling to convey a single message, made possible by the ready availability of online video and cheap editing software.

So, supercuts. But what? Making a single supercut seemed cheap. I first suggested making a visual index of supercuts, or a visualization of every clip.

But Michael had a better idea — going meta. We were going to build a SUPERSUPERCUT, a supercut composed entirely out of other supercuts. And, if we had time, we’d make a dedicated supercut index.

Making the SuperSupercut

There were four big parts: index every supercut in a database, download all the supercuts locally, break each video into its original shots, and stitch the clips back together randomly.

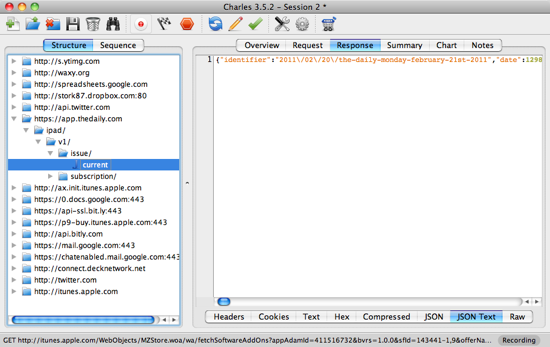

Using my blog post as a guide, Michael added every supercut to a new table in MySQL, along with a title, description, and a category. While Michael did that, I wrote a simple script that used youtube-dl to pull the video files from YouTube and store them locally.
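For illustration, here’s a rough Python sketch of that index-and-download step. It is not the script I wrote that day. It uses SQLite as a stand-in for our MySQL table, and the `url` column and `videos/` output directory are hypothetical; only the title, description, and category fields come from our actual setup. youtube-dl’s `-o` output-template flag and its `%(id)s`/`%(ext)s` fields are real.

```python
import sqlite3

# Hypothetical schema: SQLite standing in for the MySQL table.
# Only title, description, and category mirror the real index; url and id are assumptions.
SCHEMA = """
CREATE TABLE supercuts (
    id INTEGER PRIMARY KEY,
    url TEXT NOT NULL,
    title TEXT NOT NULL,
    description TEXT,
    category TEXT
)
"""


def create_index(conn):
    """Create the supercut index table."""
    conn.execute(SCHEMA)


def add_supercut(conn, url, title, description, category):
    """Insert one supercut and return its row id."""
    cur = conn.execute(
        "INSERT INTO supercuts (url, title, description, category) VALUES (?, ?, ?, ?)",
        (url, title, description, category),
    )
    return cur.lastrowid


def download_command(url, out_dir="videos"):
    """Build a youtube-dl command line; -o sets the output filename template."""
    return ["youtube-dl", "-o", f"{out_dir}/%(id)s.%(ext)s", url]


def download_commands(conn, out_dir="videos"):
    """One download command per indexed supercut, in insertion order."""
    rows = conn.execute("SELECT url FROM supercuts ORDER BY id")
    return [download_command(url, out_dir) for (url,) in rows]
```

Each command could then be run with `subprocess.run(cmd, check=True)`; keeping command-building separate from execution makes it easy to dry-run the list first.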

To split the clips, we needed to find a way to do scene detection — identifying cuts in a video by looking at movement between two consecutive frames. But we needed a way to do it from my Linux server, which ruled out everything with a GUI.
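Shot-detection tools differ in how they measure change between frames. A simplified sketch, and not necessarily what shotdetect does internally, compares the average pixel difference between consecutive grayscale frames and flags a cut whenever it jumps past a threshold. The frame format (flat lists of 0–255 values) and the default threshold of 30 are assumptions chosen for illustration.

```python
def frame_difference(a, b):
    """Mean absolute pixel difference between two equal-sized grayscale frames."""
    if len(a) != len(b):
        raise ValueError("frames must have the same number of pixels")
    if not a:
        return 0.0
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def detect_cuts(frames, threshold=30.0):
    """Return the indices of frames that start a new shot (frame 0 always does)."""
    if not frames:
        return []
    cuts = [0]
    for i in range(1, len(frames)):
        # A large jump between neighboring frames is treated as a hard cut.
        if frame_difference(frames[i - 1], frames[i]) > threshold:
            cuts.append(i)
    return cuts


def shots_from_cuts(cuts, total_frames, fps):
    """Convert cut indices into (start_seconds, duration_seconds) pairs."""
    bounds = cuts + [total_frames]
    return [(start / fps, (end - start) / fps) for start, end in zip(bounds, bounds[1:])]
```

A threshold like this also explains the dialogue problem: a shot/reverse-shot exchange looks like a hard cut at the pixel level, even when it’s one continuous scene.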

After some research, I found a very promising lead in an obscure, poorly-maintained French Linux utility called shotdetect. It did exactly what we needed — analyze a video and return an XML file of scene start times and durations.

The most challenging part of the entire day, by far, was simply getting shotdetect to compile. The package only had binaries for Debian and the source hadn’t been updated since September 2009. Since then, the video libraries it needed have changed dramatically, and shotdetect wouldn’t compile without locating them.

Three frustrating hours later, we called in the help of one of the beardiest geeks I know, Daniel Ceregatti, an old friend and coworker. After 20 minutes of hacking the C++ source, we were up and running.

With the timecodes and durations from shotdetect, we used ffmpeg to split each supercut into hundreds of smaller MPEG-2 videos, all normalized to the same 640×480 dimensions with AAC audio. The results weren’t perfect, since many scenes were broken up during dialogue by camera changes, but they were good enough.
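Turning a list of shot start times and durations into ffmpeg jobs might look something like this sketch. The output naming scheme, the `clips/` directory, and the MPEG transport stream (`.ts`) container for the MPEG-2/AAC clips are assumptions; `-ss`, `-i`, `-t`, `-s`, `-vcodec`, and `-acodec` are standard ffmpeg options.

```python
import os


def clip_commands(source, shots, out_dir="clips"):
    """Build one ffmpeg command per (start_seconds, duration_seconds) shot.

    Hypothetical naming: clips/<source-basename>-0000.ts, -0001.ts, ...
    """
    base = os.path.splitext(os.path.basename(source))[0]
    commands = []
    for n, (start, duration) in enumerate(shots):
        out = os.path.join(out_dir, f"{base}-{n:04d}.ts")
        commands.append([
            "ffmpeg",
            "-ss", f"{start:.3f}",    # seek to the shot's start time
            "-i", source,
            "-t", f"{duration:.3f}",  # keep only this shot's duration
            "-s", "640x480",          # normalize every clip to the same frame size
            "-vcodec", "mpeg2video",  # MPEG-2 video
            "-acodec", "aac",         # AAC audio
            out,
        ])
    return commands
```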

As ffmpeg worked, I stored info about each newly generated clip in MySQL. From there, it was simple to generate a random ten-minute playlist of clips between a half-second and three seconds long.
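The playlist logic fits in a few lines. This sketch assumes each clip is a hypothetical `(path, duration_in_seconds)` pair pulled from the database. It keeps only clips between 0.5 and 3 seconds, shuffles them, and adds clips until the next one would push the total past ten minutes.

```python
import random


def build_playlist(clips, target_seconds=600.0, min_len=0.5, max_len=3.0, seed=None):
    """Pick a random sequence of short clips totaling at most target_seconds.

    clips is an iterable of (path, duration_seconds) pairs.
    Returns (list_of_paths, total_seconds).
    """
    rng = random.Random(seed)
    # Keep only clips within the allowed length range.
    eligible = [(p, d) for p, d in clips if min_len <= d <= max_len]
    rng.shuffle(eligible)
    playlist, total = [], 0.0
    for path, duration in eligible:
        # Stop as soon as the next clip would overshoot the target length.
        if total + duration > target_seconds:
            break
        playlist.append(path)
        total += duration
    return playlist, total
```

Assuming a `duration` column, the filtering and shuffling could instead be pushed into the MySQL query itself with `WHERE duration BETWEEN 0.5 AND 3 ORDER BY RAND()`.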

With that list, we used the Unix `cat` utility to concatenate all the videos together into a finished supersupercut. We tweaked the results after some early tests, which you can see on YouTube.
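Plain `cat` works because MPEG program and transport streams are sequences of self-contained packets, so appending one file’s bytes to another usually yields a playable stream (the same trick won’t work with MP4 files). A Python equivalent of `cat clip1 clip2 ... > supersupercut`, sketched here with hypothetical file names, is just a byte-for-byte copy:

```python
import shutil


def concatenate(paths, out_path):
    """Append each file's raw bytes to out_path, like `cat a b c > out`."""
    with open(out_path, "wb") as out:
        for path in paths:
            with open(path, "rb") as src:
                # Stream in chunks so large video files aren't loaded into memory.
                shutil.copyfileobj(src, out)
```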

While the videos processed overnight, we registered the domain, built the rest of the website, and designed our slides for Saturday’s event, taking time out for wonderful Korean food with fellow team Ben Cerveny and Liz Magic-Laser. I finally got to sleep at 5:30am, but I’m thrilled with the results.

The Future

There were several things we talked about, but simply didn’t have time to do.

I’m planning on using the launch of Supercut.org to finally retire my old supercut list by adding a way to browse and sort the entire index of supercuts by date, source, and genre. Most importantly, I’m going to add a way for anyone to submit their own supercuts to the index.

And of course, when any supercut is added, it will automatically become part of the randomized supersupercut on the homepage: an evolving tribute to this unique art form.